How To Handle Youtube Site With Selenium

This article was submitted as part of Analytics Vidhya's Internship Challenge.

Introduction

I'm an avid YouTube user. The sheer amount of content I can watch on a single platform is staggering. In fact, a lot of my data science learning has happened through YouTube videos!

So, I was browsing YouTube a few weeks ago searching for a certain category to watch. That's when my data scientist thought process kicked in. Given my love for web scraping and machine learning, could I extract data about YouTube videos and build a model to classify them into their respective categories?

I was intrigued! This sounded like the perfect opportunity to combine my existing Python and data science knowledge with my curiosity to learn something new. And Analytics Vidhya's internship challenge offered me the chance to pen down my learning in article form.

Web scraping is a skill I feel every data science enthusiast should know. It is immensely helpful when we're looking for data for our project or want to analyze specific data present only on a website. Keep in mind though, web scraping should not cross ethical and legal boundaries.

In this article, we'll learn how to use web scraping to extract YouTube video data using Selenium and Python. We will then use the NLTK library to clean the data and then build a model to classify these videos based on specific categories.

You can also check out the below tutorials on web scraping using different libraries:

- Beginner's guide to Web Scraping in Python (using BeautifulSoup)

- Web Scraping in Python using Scrapy (with multiple examples)

- Beginner's Guide on Web Scraping in R (using rvest)

Note: BeautifulSoup is another library for web scraping. You can learn about this using our free course- Introduction to Web Scraping using Python.

Table of Contents

- Overview of Selenium

- Prerequisites for our Web Scraping Project

- Setting up the Python Environment

- Scraping Data from YouTube

- Cleaning the Scraped Data using the NLTK Library

- Building our Model to Classify YouTube Videos

- Analyzing the Results

Overview of Selenium

Selenium is a popular tool for automating browsers. It's primarily used for testing in the industry but is also very handy for web scraping. You must have come across Selenium if you've worked in the IT field.

We can easily program a Python script to automate a web browser using Selenium. It gives us the freedom we need to efficiently extract the data and store it in our preferred format for future use.

Selenium requires a driver to interface with our chosen browser. Chrome, for example, requires ChromeDriver, which needs to be installed before we start scraping. The Selenium web driver speaks directly to the browser using the browser's own engine to control it. This makes it incredibly fast.

Prerequisites for our Web Scraping Project

There are a few things we must know before jumping into web scraping:

- Basic knowledge of HTML and CSS is a must. We need this to understand the structure of a webpage we're about to scrape

- Python is required to clean the data, explore it, and build models

- Knowledge of some basic libraries like Pandas and NumPy would be the cherry on the cake

Setting up the Python Environment

Time to power up your favorite Python IDE (that's Jupyter notebooks for me)! Let's get our hands dirty and start coding.

Step 1: Install Python binding:

    #Open terminal and type-
    $ pip install selenium

Step 2: Download Chrome WebDriver:

- Visit https://sites.google.com/a/chromium.org/chromedriver/download

- Select the compatible driver for your Chrome version

- To check the Chrome version you are using, click on the three vertical dots on the top right corner

- Then go to Help -> About Google Chrome

Step 3: Move the driver file to a PATH:

Go to the downloads directory, unzip the file, and move it to a directory on the PATH such as /usr/local/bin.

    $ cd Downloads
    $ unzip chromedriver_linux64.zip
    $ mv chromedriver /usr/local/bin/
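Before moving on, it can help to confirm that the driver is actually discoverable. This is a minimal sketch using only the standard library; `find_driver` is a hypothetical helper name, not part of Selenium.

```python
import shutil


def find_driver(name="chromedriver"):
    """Return the full path of the driver executable if it is on PATH, else None."""
    return shutil.which(name)


path = find_driver()
if path is None:
    print("chromedriver was not found on PATH; re-check Step 3")
else:
    print(f"chromedriver found at {path}")
```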

We're all set to begin web scraping now.

Scraping Data from YouTube

In this article, we'll be scraping the video ID, video title, and video description of a particular category from YouTube. The categories we'll be scraping are:

- Travel

- Science

- Food

- History

- Manufacturing

- Art & Dance

So let's begin!

- First, let's import some libraries:

- Before we do anything else, open YouTube in your browser. Type in the category you want to search videos for and set the filter to "videos". This will display only the videos related to your search. Copy the URL after doing this.

- Next, we need to set up the driver to fetch the content of the URL from YouTube:

- Paste the link into the driver.get("Your Link Here") function and run the cell. This will open a new browser window for that link. We will do all the following tasks in this browser window

- Fetch all the video links present on that particular page. We will create a "list" to store those links

- Now, go to the browser window, right-click on the page, and select 'inspect element'

- Search for the anchor tag with id = "video-title" and then right-click on it -> Copy -> XPath. The XPath should look something like: //*[@id="video-title"]
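The first few steps above can be sketched roughly as follows. This is only an illustration: `build_search_url` and `open_results` are hypothetical helper names, and the generated URL does not include the "videos" filter, so copying the filtered URL from the browser remains the more faithful route.

```python
from urllib.parse import urlencode


def build_search_url(query):
    """Build a plain YouTube search URL for a category such as 'travel'.

    Assumption: the "videos" filter is not encoded here; paste the URL
    copied from the browser instead if the filter matters.
    """
    return "https://www.youtube.com/results?" + urlencode({"search_query": query})


def open_results(url):
    """Start Chrome through ChromeDriver and load the given page."""
    # Imported here so build_search_url can be tried without Selenium installed.
    from selenium import webdriver

    driver = webdriver.Chrome()  # expects chromedriver to be discoverable, e.g. on PATH
    driver.get(url)
    return driver
```

A call such as `driver = open_results(build_search_url("travel"))` would then open the browser window used in the remaining steps.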

With me so far? Now, write the code below to start fetching the links from the page and run the cell. This should fetch all the links present on the web page and store them in a list.

Note: Scroll all the way down to load all the videos on that page.
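One possible way to write the link-fetching step is sketched here, using the Selenium 4 locator style. The function names are hypothetical, and a single scroll may not load every result, so the scroll call may need repeating with a short pause in practice.

```python
def hrefs_from_elements(elements):
    """Collect the non-empty "href" attribute of each anchor element."""
    links = []
    for element in elements:
        href = element.get_attribute("href")
        if href:  # some matched nodes carry no link
            links.append(href)
    return links


def collect_video_links(driver):
    """Scroll to the bottom once, then read every element with id="video-title"."""
    # Imported here so hrefs_from_elements can be tried without Selenium installed.
    from selenium.webdriver.common.by import By

    driver.execute_script(
        "window.scrollTo(0, document.documentElement.scrollHeight);"
    )
    anchors = driver.find_elements(By.XPATH, '//*[@id="video-title"]')
    return hrefs_from_elements(anchors)
```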

The above code will fetch the "href" attribute of the anchor tag we searched for.

Now, we need to create a dataframe with four columns – "link", "title", "description", and "category". We will store the details of videos for different categories in these columns:
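A minimal sketch of such an empty dataframe, assuming pandas is installed:

```python
import pandas as pd

# One row per video; the column names follow the text above.
df = pd.DataFrame(columns=["link", "title", "description", "category"])
print(df.columns.tolist())
```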

We are all set to scrape the video details from YouTube. Here's the Python code to do it:
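The original listing is not reproduced here, so what follows is a sketch of how such a loop could look rather than the author's exact code. The two CSS selectors are assumptions (YouTube's markup changes often and should be checked with "inspect element"), and `video_id_from_link` and `scrape_videos` are hypothetical names.

```python
from urllib.parse import parse_qs, urlparse


def video_id_from_link(link):
    """Return the value of the "v" query parameter, or "" if it is absent."""
    return parse_qs(urlparse(link).query).get("v", [""])[0]


def scrape_videos(driver, links, v_category, timeout=10):
    """Visit each link and return one dict per video for the dataframe."""
    # Imported here so video_id_from_link can be tried without Selenium installed.
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    # Assumed selectors; verify them against the live page before use.
    title_css = "h1 yt-formatted-string"
    description_css = "#description yt-formatted-string"

    wait = WebDriverWait(driver, timeout)
    rows = []
    for x in links:
        driver.get(x)
        v_id = video_id_from_link(x)
        # until() polls until the element exists or the timeout expires
        v_title = wait.until(
            EC.presence_of_element_located((By.CSS_SELECTOR, title_css))
        ).text
        v_description = wait.until(
            EC.presence_of_element_located((By.CSS_SELECTOR, description_css))
        ).text
        rows.append({"link": v_id, "title": v_title,
                     "description": v_description, "category": v_category})
    return rows
```

The returned rows can be turned into a dataframe with `pd.DataFrame(rows)`.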

Let's break down this code block to understand what we just did:

- "wait" will ignore instances of NotFoundException (NoSuchElementException in Selenium's Python bindings) that are encountered (thrown) by default in the 'until' condition. It will immediately propagate all others

- Parameters:

- driver: The WebDriver instance to pass to the expected conditions

- timeOutInSeconds: The timeout in seconds when an expectation is called (named timeout in the Python bindings)

- v_category stores the video category name we searched for earlier

- The "for" loop is applied on the list of links we created above

- driver.get(x) traverses through all the links one-by-one and opens them in the browser to fetch the details

- v_id stores the stripped video ID from the link

- v_title stores the video title fetched by using the CSS path

- Similarly, v_description stores the video description by using the CSS path

During each iteration, our code saves the extracted data within the dataframe we created earlier.

We have to follow the same steps for the remaining five categories. We should have six different dataframes once we are done with this. Now, it's time to merge them together into a single dataframe:
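One way to do the merge is `pd.concat`. The sketch below uses two toy dataframes with made-up rows in place of the six scraped ones; the variable names are illustrative.

```python
import pandas as pd

# Toy stand-ins for two of the six per-category dataframes.
df_travel = pd.DataFrame({"link": ["a1"], "title": ["Beach trip"],
                          "description": ["Sun and sand"], "category": ["Travel"]})
df_food = pd.DataFrame({"link": ["b2"], "title": ["Quick pasta"],
                        "description": ["Dinner in ten minutes"], "category": ["Food"]})

# ignore_index=True renumbers the rows 0..n-1 in the merged dataframe
frames = [df_travel, df_food]
df = pd.concat(frames, ignore_index=True)
print(df)
```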

Voila! We have our final dataframe containing all the desired details of a video from all the categories mentioned above.

Cleaning the Scraped Data using the NLTK Library

In this section, we'll use the popular NLTK library to clean the data present in the "title" and "description" columns. NLP enthusiasts will love this section!

Before we start cleaning the data, we need to store all the columns separately so that we can perform different operations quickly and easily:

Import the required libraries first:

Now, create a list in which we can store our cleaned data. We will store this data in a dataframe later. Write the following code to create a list and do some data cleaning on the "title" column from df_title :
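A sketch of this cleaning step is shown below, assuming NLTK's English stop word list and Porter stemmer. `clean_text` and `clean_column` are hypothetical helper names, and the stop word list needs a one-time `nltk.download("stopwords")`.

```python
import re


def clean_text(text, stop_words, stem):
    """Keep letters only, lowercase, drop stop words, and stem what remains."""
    letters_only = re.sub("[^a-zA-Z]", " ", text)
    words = letters_only.lower().split()
    return " ".join(stem(word) for word in words if word not in stop_words)


def clean_column(texts):
    """Clean an iterable of strings and return the results as a list."""
    # Imported here so clean_text can be tried without NLTK installed.
    from nltk.corpus import stopwords
    from nltk.stem.porter import PorterStemmer

    stop_words = set(stopwords.words("english"))
    stem = PorterStemmer().stem
    return [clean_text(text, stop_words, stem) for text in texts]
```

With the title column stored in `df_title`, `corpus = clean_column(df_title)` would produce the list of cleaned titles.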

Did you see what we did here? We removed all the punctuation from the titles and only kept the English root words. After all these iterations, we are ready with our list full of data.

We need to follow the same steps to clean the "description" column from df_description:

Note: The range is selected as per the rows in our dataset.

Now, convert these lists into dataframes:

Next, we need to label encode the categories. The "LabelEncoder()" function encodes labels with a value between 0 and n_classes – 1, where n_classes is the number of distinct labels.
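A small illustration of label encoding with scikit-learn; the toy list stands in for the category column.

```python
from sklearn.preprocessing import LabelEncoder

categories = ["Travel", "Food", "Travel", "History"]  # toy stand-in for df_category

encoder = LabelEncoder()
encoded = encoder.fit_transform(categories)

print(list(encoder.classes_))  # distinct labels in sorted order
print(list(encoded))           # each label's position in that sorted order
```

The codes follow the sorted order of the distinct labels, and `encoder.inverse_transform` maps them back to names.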

Here, we have applied label encoding on df_category and stored the result into dfcategory. We can store our cleaned and encoded data in a new dataframe:

We're not quite all the way done with our cleaning and transformation part.

We should create a bag-of-words so that our model can understand the keywords from that bag to classify videos accordingly. Here's the code to create a bag-of-words:
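A bag-of-words can be built with scikit-learn's `CountVectorizer`. The sketch below runs on a three-line toy corpus; how the title and description lists are combined in the real dataset is not shown here.

```python
from sklearn.feature_extraction.text import CountVectorizer

corpus = ["travel beach sun", "food recipe cook", "travel food"]  # toy cleaned texts

# max_features caps the vocabulary at the most frequent terms
cv = CountVectorizer(max_features=1500)
X = cv.fit_transform(corpus).toarray()

print(sorted(cv.vocabulary_))  # the learned terms, one per column
print(X)                       # one row of term counts per document
```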

Note: Here, we created 1500 features from data stored in the lists – corpus and corpus1. "X" stores all the features and "y" stores our encoded data.

We are all set for the most anticipated part of a data scientist's role – model building!

Building our Model to Classify YouTube Videos

Before we build our model, we need to split the data into training set and test set:

- Training set: A subset of the data to train our model

- Test set: Contains the remaining data to test the trained model

Make certain that your test set meets the following two conditions:

- Large enough to yield statistically meaningful results

- Representative of the dataset as a whole. In other words, don't pick a test set with different characteristics than the training set

We can use the following code to split the data:
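A sketch of the split with scikit-learn's `train_test_split` on toy data. The `test_size` and `random_state` values are illustrative choices, not values taken from the article, and `stratify=y` is one way to keep the class mix of the test set close to that of the full dataset.

```python
from sklearn.model_selection import train_test_split

X = [[i] for i in range(10)]  # toy feature rows
y = [0, 1] * 5                # toy labels, five of each class

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0, stratify=y
)
print(len(X_train), len(X_test))
```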

Time to train the model! We will use the random forest algorithm here. So let's go ahead and train the model using the RandomForestClassifier() function:
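The training step might look like the sketch below. It runs on synthetic data from `make_classification` instead of the scraped features, and the parameter values are illustrative, not the article's settings.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Synthetic stand-in for the bag-of-words features and encoded categories.
X, y = make_classification(n_samples=200, n_features=20, n_informative=5,
                           n_classes=3, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

classifier = RandomForestClassifier(n_estimators=100, criterion="entropy",
                                    random_state=0)
classifier.fit(X_train, y_train)

y_pred = classifier.predict(X_test)
print(f"Test accuracy: {classifier.score(X_test, y_test):.2%}")
```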

Parameters:

- n_estimators : The number of trees in the forest

- criterion : The function to measure the quality of a split. Supported criteria are "gini" for Gini impurity and "entropy" for information gain

Note: These parameters are tree-specific.

We can now check the performance of our model on the test set:

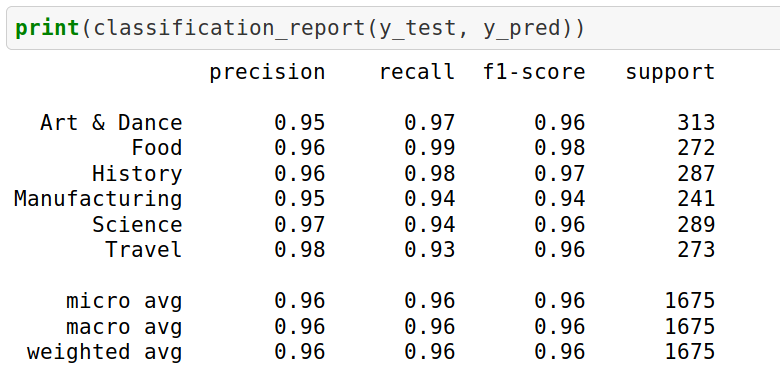

We get an impressive 96.05% accuracy. Our entire process went pretty smoothly! But we're not done yet – we need to analyze our results as well to fully understand what we achieved.

Analyzing the Results

Let's check the classification report:

The result will give the following attributes:

- Precision is the ratio of correctly predicted positive observations to the total predicted positive observations. Precision = TP / (TP + FP)

- Recall is the ratio of correctly predicted positive observations to all the observations in the actual class. Recall = TP / (TP + FN)

- F1 Score is the harmonic mean of Precision and Recall. Therefore, this score takes both false positives and false negatives into account. F1 Score = 2 * (Recall * Precision) / (Recall + Precision)
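To make the three formulas concrete, here is a small worked example in plain Python. The counts are made up for illustration, and the helper assumes the denominators are non-zero.

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall, and F1 from raw counts for one class."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * (recall * precision) / (recall + precision)
    return precision, recall, f1


# Made-up counts: 8 true positives, 2 false positives, 4 false negatives.
precision, recall, f1 = precision_recall_f1(tp=8, fp=2, fn=4)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```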

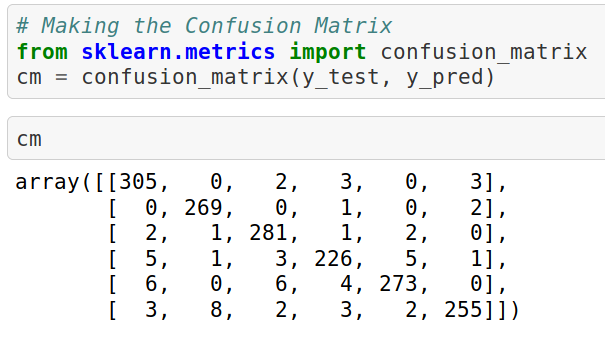

We can check our results by creating a confusion matrix as well:
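A sketch with scikit-learn's `confusion_matrix` on toy labels. With three toy classes the result is 3×3; rows are the actual classes and columns are the predicted ones.

```python
from sklearn.metrics import confusion_matrix

y_test = [0, 1, 2, 2, 1, 0]  # toy actual labels
y_pred = [0, 1, 2, 1, 1, 0]  # toy predictions, with one class-2 video mislabeled as 1

cm = confusion_matrix(y_test, y_pred)
print(cm)
```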

The confusion matrix will be a 6×6 matrix since we have six classes in our dataset.

End Notes

I've always wanted to combine my interest in scraping and extracting data with NLP and machine learning. So I loved immersing myself in this project and penning down my approach.

In this article, we just witnessed Selenium's potential as a web scraping tool. Congratulations on successfully scraping and creating a dataset to classify videos!

I look forward to hearing your thoughts and feedback on this article.

Source: https://www.analyticsvidhya.com/blog/2019/05/scraping-classifying-youtube-video-data-python-selenium/

Posted by: maggardrembed83.blogspot.com
